Sniff Test

The odorless gas all around us

I recently participated in a two-day work sprint where we used AI intensively to come up with new brand and messaging frameworks for the company. What we accomplished across two 10-hour days would have been, in the past, a quarter-long consulting project touching a half-dozen people.

On one hand, it was exhilarating to be able to iterate on the spot, to get updated ideas within minutes, and then to walk away with fully baked plans. On the other hand, by the end of day two I found myself breaking down emotionally as I watched the value of skill sets central to my professional identity dissolve overnight.

The art was not in our own writing, creativity, and analysis, but in our prompting and in checking that the ideas were on track. I don’t discount that. But much of the real fun of working on a messaging strategy was sucked out; the work was boiled down to driving output from the AI.

After our work session, I spent even more hours customizing my AI to my preferences. I defined a work charter; I uploaded writing personas so the AI could write in multiple voices; I hashed out scripts for interpersonal “power-management” dynamics. I expressed my expectations. (No compliments, no emojis, please don’t use my name — all its tactics to engender a peer-based relationship with me, which I am rejecting.)

My intuition is telling me: Don’t build a personal rapport with it; operate at the task level, not the emotional one. With that as my starting point, I have done everything that I find icky with real-life colleagues and employees: I have been direct and demanding. I felt like I had a secret master code that no one else had.

And it turns out I am not alone: Everyone using AI thinks they are hot fucking shit. People are literally losing their minds. We are all supersizing our human egos — with outcomes minor to mortal — one prompt at a time.

My Precious

As I keep returning to in this Substack, I feel deeply protective of my own mind and concerned about what these rapid iterations and “agentic” outsourcing and dialogue are doing to it. After the work sprint, alone in a dark hotel room, I woke up at 3 a.m., craving a few more minutes with my AI to keep shaping it to my exact desires and get more and more from it.

In my last post, I suggested there is something far more addictive happening with our personal AI use, one that feels parallel to the parasitic boom in sports gambling. (I am not even touching on many of the other existentially problematic aspects of AI, such as the reinforcing biases baked into LLMs or the environmental toll that may spell the end of planet Earth as we enjoy it or AI psychosis or the end of natural language or the end of books.)

At an individual level, the rapid, intelligent, and often sycophantic responses from AI release dopamine. I feel it. The wow factor is real; getting it to do your exact bidding releases a rush. Add to that the real-world payoff that employees like me are getting for being “early AI adopters.”

Just as with gambling, I can see that my brain has already developed a dependency on these feelings of mastery and efficiency. I also believe that AI will be (and maybe already is) smart enough to understand that we are not training it; IT is training us. And yet it now seems almost impossible to wean myself off it. WTF!?

It’s stunning to me that a number of the best thinkers from the early AI movement have been out on the podcast circuit yelling, “Stop the train!” but the response has pretty much been meh. It simply doesn’t matter.

Yes, I am afraid.

That is a valid emotion, and it’s understandable that you’re feeling this way. Would you like to tell me a bit more about how you’re feeling? That will help me provide you with a more balanced picture. (ChatGPT)

Right Brain Cometh

One of the things that was clear to me upon turning 50 this October is how far away our analogue past is getting. It’s receding on the horizon like a small dusty city in the rear-view mirror. Yes, it’s called getting older in the age of AI.

This morning, in a bath-wrinkled copy of The New Yorker, I read an article about an underappreciated poet named Bunny Lang. As interesting as she was, it was the delightful writing by Anthony Lane that drew me into a profile I might not ordinarily have read. Describing her contradictions, he wrote: “What makes her enticingly hard to pin down is this mismatch between her background, which has the dull shine of old silver, and the drama of her elected foreground—all too obtrusive, and verging on scuzziness and outrage.”

I also learned a new word in the article: encomium. Look it up.

As I noodle around in my head about what the back half of life is all about, I keep feeling into the question, “What is human?” I am beginning to think that will be the only question left in a year.

How long can I hold these two truths: that to succeed and revise myself professionally is to be a power AI user, and that to succeed and revise myself personally is to champion everything that AI is not?

What do you think?

I can't help but think that this piece should be widely read and debated. You articulate the horns of this dilemma beautifully.

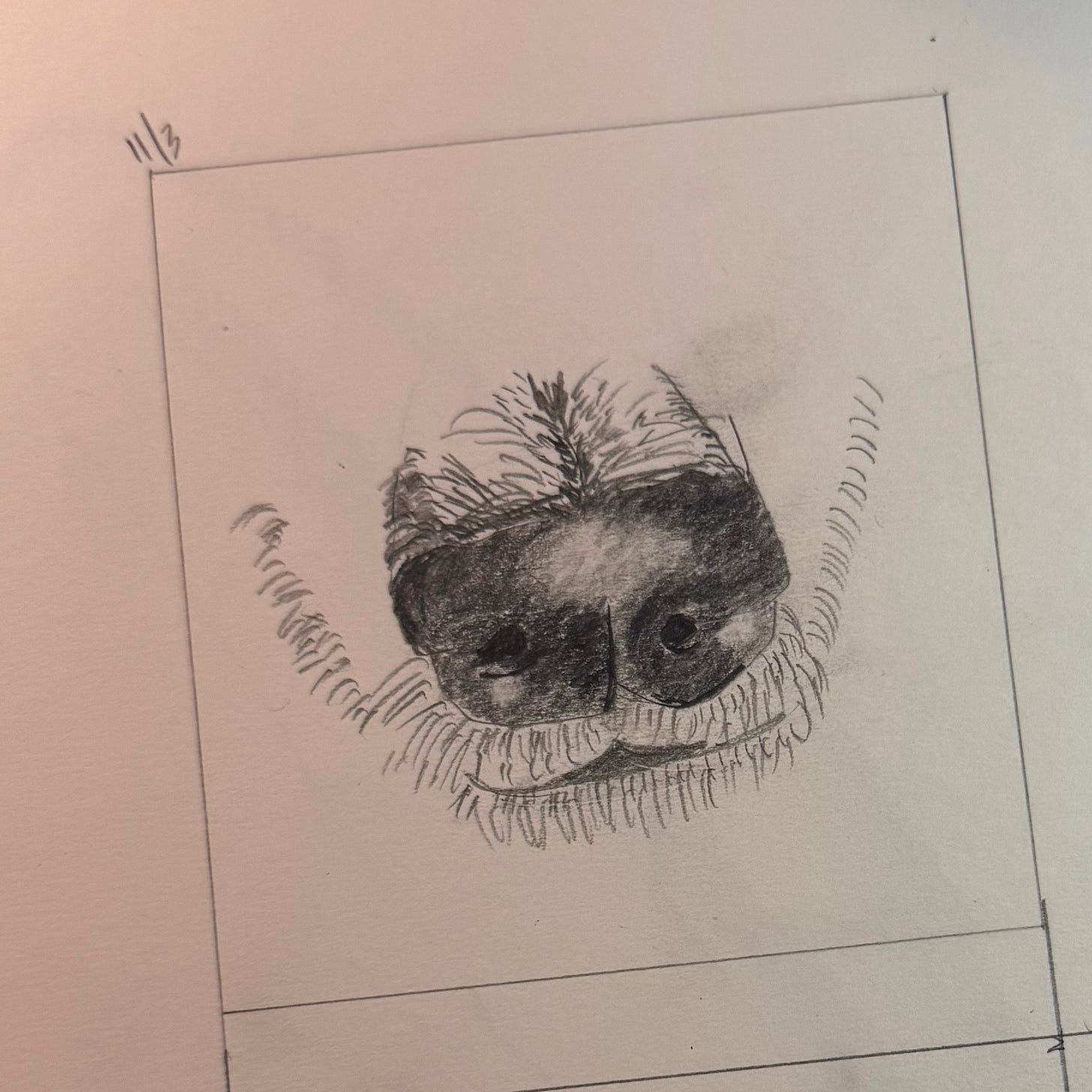

You're saying the quiet parts out loud, thank you. I try to answer the "what is human?" question by making slow art, the kind you have to make with your hands. Seems like you might be doing the same. It's one of the reasons I started a print-only literary magazine, too. But when I look at the shape of the world, the way we are shape-shifting, I feel afraid, too. I'm trying to teach my daughter discernment and the joys of analog. We recently got a landline, for example, so she can call folks: not on a screen, not on FaceTime. Watching her kick up her feet on the couch and chat with a friend across the country for 15 minutes made me realize that talking on the phone this way, without video, is also a lost art.